Can It Run Crysis? An Analysis of Why a 13-Year-Old Game Is Still Talked About

Every year hundreds of new games are released to the market. Some do very well and sell in the millions, but that alone does not guarantee ascending to icon status. Every once in a while though, a game is made that becomes part of the industry's history, and we still talk about it or play it years after it first appeared.

For PC gamers, there's one title that's almost legendary thanks to its incredible, ahead-of-its-time graphics and its ability to grind PCs into single digit frame rates. And later on, for providing a decade-plus worth of memes. Join us as we take a look back at Crysis and see what made it so special.

Crytek's Early Days

Before we dig into the guts and glory of Crysis, it's worth a quick trip back in time, to see how Crytek laid down its roots. Based in Coburg, Germany, the software development company formed in the fall of 1999, with three brothers -- Avni, Cevat, and Faruk Yerli -- teaming up under Cevat's leadership, to create PC game demos.

A key early project was X-Isle: Dinosaur Island. The team promoted this and other demos at 1999's E3 in Los Angeles. Nvidia was one of the many companies that the Yerli brothers pitched their software to, but they were especially keen on X-Isle. And for good reason: the graphics were astonishing for the time. Huge draw distances, coupled with beautiful lighting and realistic surfaces, made it an absolute stunner.

To Nvidia, Crytek's demo was the perfect tool with which to promote their future GeForce range of graphics cards and a deal was put together -- when the GeForce 3 came out in February 2001, Nvidia used X-Isle to showcase the abilities of the card.

With the success of X-Isle and some much-needed income, Crytek could turn their attention to making a full game. Their first project, called Engalus, was promoted throughout 2000, but the team eventually abandoned it. The reasons aren't overly clear, but with the exposure generated by the Nvidia deal, it wasn't long before Crytek picked up another big name contract: French game company Ubisoft.

This would be about creating a full, AAA title out of X-Isle. The end result was 2004's Far Cry.

It's not difficult to see where the technological aspects of X-Isle found a home in Far Cry. Once again, we were treated to huge landscapes, replete with lush vegetation, all rendered with cutting edge graphics. While not a hit with the critics (it was a TechSpot favorite nonetheless), Far Cry sold pretty well, and Ubisoft saw lots of potential in the brand and the game engine that Crytek had put together.

Another contract was drawn up, under which Ubisoft would retain full rights to the Far Cry intellectual property and permanently license Crytek's software, CryEngine. This would ultimately, over the years, morph into their own Dunia engine, powering games such as Assassin's Creed II and the next three Far Cry titles.

By 2006, Crytek had come under the wing of Electronic Arts, and moved offices to Frankfurt. Development of CryEngine continued and their next game project was announced: Crysis. From the very outset, Crytek wanted this to be a technology tour-de-force, working with Nvidia and Microsoft to push the boundaries of what DirectX 9 hardware could achieve. It would also eventually be used to promote the feature set of DirectX 10, but this came about relatively late in the project's development.

2007: A Golden Year for Games

Crysis was scheduled for release towards the end of 2007 and what a year that turned out to be for gaming, especially in terms of graphics. You could say The Elder Scrolls IV: Oblivion started the ball rolling the year prior, setting a benchmark for how an open world 3D game should look (except for character models) and what levels of interaction could be achieved.

For all its visual splendor, Oblivion showed signs of the limitations that come with developing for multiple platforms, namely PCs and consoles of the time. The Xbox 360 and PlayStation 3 were complex machines, and in terms of architecture, they were quite different from Windows systems. The former, from Microsoft, had a CPU and GPU unique to that console: a triple core, in-order-execution PowerPC chip from IBM and an ATI graphics chip that boasted a unified shader layout.

Sony's machine sported a fairly regular Nvidia GPU (a modified GeForce 7800 GTX), but the CPU was anything but standard. Jointly developed by Toshiba, IBM, and Sony, the Cell processor had a PowerPC core to it, but also packed in eight vector co-processors.

With each console having its own distinct benefits and quirks, creating a game that would work well on them and a PC was a significant challenge. The majority of developers generally aimed for a lowest common denominator, and this was typically set by the relatively low amount of system and video memory available, as well as the outright graphics processing capabilities of the systems.

The Xbox 360 had just 512 MB of GDDR3 shared memory and an additional 10 MB of embedded DRAM for the graphics chip, whereas the PS3 had 256 MB of XDR DRAM system memory and 256 MB of GDDR3 for the Nvidia GPU. However, by the middle of 2007, top end PCs could be equipped with 2 GB of DDR2 system RAM and 768 MB of GDDR3 on the graphics side.

Where the console versions of the game had fixed graphics settings, the PC edition allowed for a wealth of things to be changed. With everything set to their maximum values, even some of the best computer hardware at the time struggled with the workload. This is because the game was designed to run best at the resolutions supported by the Xbox 360 and PS3 (such as 720p or scaled 1080p) at 30 frames per second, so even though PCs had potentially better components, running with more detailed graphics and higher resolutions significantly increased the workload.

The first aspect of this particular 3D game to be limited, due to the memory footprint of consoles, was the size of textures, so lower resolution versions were used wherever possible. The number of polygons used to create the environment and models was kept relatively low as well.

Looking at another Oblivion scene using wireframe mode (no textures) shows trees made of several hundred triangles, at most. The leaves and grass are just simple textures, with transparent regions to give the impression that each blade of foliage is a discrete item.

Character models and static objects, such as buildings and rocks, were made out of more polygons, but overall, more attention was paid to how scenes were designed and lit than to sheer geometry and texture complexity. The art direction of the game was not aimed at realism -- instead, Oblivion was set in a cartoon-like fantasy world, so the lack of rendering complexity can be somewhat forgiven.

That said, a similar situation can also be observed in one of the biggest game releases of 2007: Call of Duty 4: Modern Warfare. This game, set in the modern, real world, was developed by Infinity Ward and published by Activision, using an engine that was originally based off id Tech 3 (Quake III Arena). The developers adapted and heavily customized it, until it was able to render graphics featuring all of the buzzwords of that time: bloom, HDR, self-shadowing, dynamic lighting and shadow mapping.

But as we can see in the image above, textures and polygon counts were kept relatively low, again due to the limitations of the consoles of that era. Objects that are persistent in the field of view (e.g. the weapon held by the player) are well detailed, but the static environment was quite basic.

All of this was artistically designed to be as realistic-looking as possible, so a judicious use of lights and shadows, along with clever particle effects and smooth animations, cast a sorcerer's wand over the scenes. But as with Oblivion, Modern Warfare was still very demanding on PCs with all details set to their highest values -- having a multi-core CPU made a big difference.

Another multi-platform 2007 hit was BioShock. Set in an underwater city, in an alternate reality 1960, the game's visual design was as key as the storytelling and character development. The software used for this title was a customized version of Epic's Unreal Engine, and like the other titles we've mentioned so far, BioShock ran the full gamut of graphics technology.

That's not to say corners weren't cut where possible -- look at the character's hand in the above image and you can easily see the polygon edges used in the model. The gradient of the lighting across the skin is fairly abrupt, too, which is an indication that the polygon count is fairly low.

And like Oblivion and Modern Warfare, the multi-platform nature of the game meant texture resolution wasn't super high. A diligent use of lights and shadows, particle effects for the water, and exquisite art design, all pushed the graphics quality to a high standard. This was seen repeated across other games made for PCs and consoles alike: Mass Effect, Half-Life 2: Episode Two, Soldier of Fortune: Payback, and Assassin's Creed all took a similar approach to graphics.

Crysis, on the other hand, was conceived to be a PC-only title and had the potential to be free of any hardware or software limitations of the consoles. Crytek had already shown they knew how to push the boundaries for graphics and performance in Far Cry... but if PCs were finding it difficult to run the latest games developed with consoles in mind, at their highest settings, would things be any better for Crysis?

Everything and the Kitchen Sink

During SIGGRAPH 2007, the annual graphics technology conference, Crytek published a document detailing the research and development they had put into CryEngine 2 -- the successor to the software used to make Far Cry. In the piece, they set out several goals that the engine had to achieve:

- Cinematographic quality rendering

- Dynamic lighting and shadowing

- HDR rendering

- Support for multi-CPU and GPU systems

- A 21 km x 21 km game play area

- Dynamic and destructible environments

With Crysis, the first title to be developed on CryEngine 2, all but one were achieved, with the gameplay area scaled down to a mere 4 km x 4 km. That may still sound large, but Oblivion had a game world that was 2.5 times larger in area. Otherwise, though, just look at what Crytek managed to accomplish on a smaller scale.

The game starts on the coastline of a jungle-filled island, located not far from the Philippines. North Korean forces have invaded and a team of American archeologists, conducting research on the island, have called for help. You and a few other gun-toting chums, equipped with special nanosuits (which give you super strength, speed, and invisibility for short periods of time), are sent to investigate.

The first few levels easily demonstrated that Crytek's claims were no hyperbole. The sea is a complex mesh of real 3D waves that fully interacts with objects in it -- there are underwater light rays, reflection and refraction, caustics and scattering, and the waves lap up and down the shore. It still holds up to scrutiny today, although it is surprising that, for all the work Crytek put into making the water look so good, you barely get to play in it during the single player game.

You might be looking at the sky and thinking that it looks right. As it should do, because the game engine solves a light scattering equation on the CPU, accounting for time of day, position of the sun, and other atmospheric conditions. The results are stored in a floating point texture, to be used by the GPU to render the skybox.

The clouds seen in the distance are 'solid', in that they're not just part of the skybox or simple applied textures. Instead they are a collection of small particles, almost like real clouds, and a process called ray-marching is done to work out where the volume of the particles lies within the 3D world. And because the clouds are dynamic objects, they cast shadows onto the rest of the scene (although this isn't visible in this image).
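To give a rough feel for what ray-marching involves, here is a minimal Python sketch. It is not Crytek's implementation: the density function, step size, and absorption constant are all made-up values for illustration. The idea is simply to step along a ray through a volume, sample the density at each step, and accumulate how much light is blocked.

```python
import math

def cloud_density(x, y, z):
    """Hypothetical density field: a soft spherical 'cloud' of radius 1 at the origin."""
    r = math.sqrt(x * x + y * y + z * z)
    return max(0.0, 1.0 - r)

def march_ray(origin, direction, step=0.05, max_dist=6.0, absorption=2.0):
    """Step along the ray, summing density, and return the fraction of light that gets through."""
    length = math.sqrt(sum(c * c for c in direction))
    unit = [c / length for c in direction]
    optical_depth = 0.0
    t = 0.0
    while t < max_dist:
        point = [o + t * c for o, c in zip(origin, unit)]
        optical_depth += cloud_density(*point) * step
        t += step
    # Beer-Lambert style falloff: denser material along the ray means less light survives.
    return math.exp(-absorption * optical_depth)

# One ray straight through the middle of the cloud, one that misses it entirely.
through_cloud = march_ray((0.0, 0.0, -3.0), (0.0, 0.0, 1.0))
past_cloud = march_ray((2.0, 0.0, -3.0), (0.0, 0.0, 1.0))
print(f"through the cloud: {through_cloud:.3f}, past the cloud: {past_cloud:.3f}")
```

The cost is easy to see from the loop: every ray needs many samples, and a real renderer does this for a great many rays per frame, which is part of why volumetric effects were so expensive in 2007.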

Continuing through the early levels provides more evidence of the capabilities of CryEngine 2. In the above image, we can see a rich, detailed environment -- the lighting, indirect and directional, is fully dynamic, with trees and bushes all casting shadows on themselves and other foliage. Branches move as you push through them and the small trunks can be shot to pieces, clearing the view.

And speaking of shooting things to pieces, Crysis let you destroy lots of things -- vehicles and buildings could be blown to smithereens. Enemy hiding behind a wall? Forget shooting through the wall, as you could in Modern Warfare, just take out the whole thing.

And as with Oblivion, almost anything could be picked up and thrown about, although it's a shame that Crytek didn't make more of this feature. It would have been really cool to pick up a Jeep and lob it into a group of soldiers -- the best you could do was punch it a bit and hope it would move enough to cause some damage. Still, how many games would let you take out somebody with a chicken or a turtle?

Even now, these scenes are highly impressive, but for 2007 it was a colossal leap forward in rendering technology. But for all its innovation, Crysis could be viewed as being somewhat run-of-the-mill, as the epic vistas and balmy locations masked the fact that the gameplay was mostly linear and quite predictable.

For example, back then vehicle-only levels were all the rage, and so unsurprisingly, Crysis followed suit. After making good progress with the island invasion, you're put in control of a nifty tank but it's all over very quickly. And perhaps for the best, because the controls were clunky, and one could get through the level without needing to take part in the battle.

There can't be too many people who haven't played Crysis or don't know about the general plot, but we won't say too much about the specifics of the storyline. However, eventually you end up deep underground, in an unearthly cave system. It's very pretty to look at, but frequently confusing and claustrophobic.

It does provide a good showing of the engine's volumetric light and fog systems, with ray-marching being used for both of them again. It's not perfect (you can see a little too much blurring around the edges of the gun) but the engine handled multiple directional lights with ease.

Once out of the cave system, it's time to head through a frozen section of the island, with intense battles and another standard gameplay feature: an escort mission. For the rendering of the snow and ice, Crytek's developers went through four revisions, cutting it very close to some internal production deadlines.

The end result is very pretty, with snow as a separate layer on top of surfaces and frozen water particles glittering in the light, but the visuals are somewhat less effective than the earlier beach and jungle scenes. Look at the large boulder in the left section of the image below: the texturing isn't so hot.

Eventually, you're back in the jungle and, sadly, yet another vehicle level. This time a small scale dropship (with clear influences from Aliens) needs an emergency pilot -- which, naturally, is you (is there anything these soldiers can't do?) and once again, the experience is a brief exercise in battling against a cumbersome control system and a frantic firefight that you can avoid taking part in.

Interestingly, when Crytek eventually released a console version of Crysis, some four years later, this entire level was dropped in the port. The heavy use of particle-based cloud systems, complex geometry, and draw distances were perhaps too much for the Xbox 360 and PS3 to handle.

Finally, the game concludes with a very intensive battle onboard an aircraft carrier, with an obligatory it's-not-dead-just-yet end boss fight. Some of the visuals in this portion of the game are very impressive, but the level design doesn't hold up to the earlier stages of the game.

All things considered, Crysis was fun to play but it wasn't an overly original premise -- it was very much a spiritual successor to Far Cry, not just in terms of location choice, but also with regards to weapons and plot twists. Even the much-touted nanosuit feature wasn't fully realized, as there was generally little specific need for its functionality. Apart from a couple of places where the strength mode was needed, the default shield setting was sufficient to get through.

It was stunningly beautiful to look at, by far the best looking release of 2007 (and for many years after that), but Modern Warfare arguably had far superior multiplayer, BioShock gave us richer storytelling, and Oblivion and Mass Effect offered depth and lots more scope for replay.

But of course, we know exactly why we're still talking about Crysis 13 years later and it's all down to memes...

All those glorious graphics came at a cost and the hardware requirements, for running at maximum settings, seemed to be beyond any PC around that time. In fact, it seemed to stay that way year after year -- new CPUs and GPUs appeared, and all failed to run Crysis like other games.

So while the scenery put a smile on your face, the seemingly permanently low frame rates definitely did not.

Why So Serious?

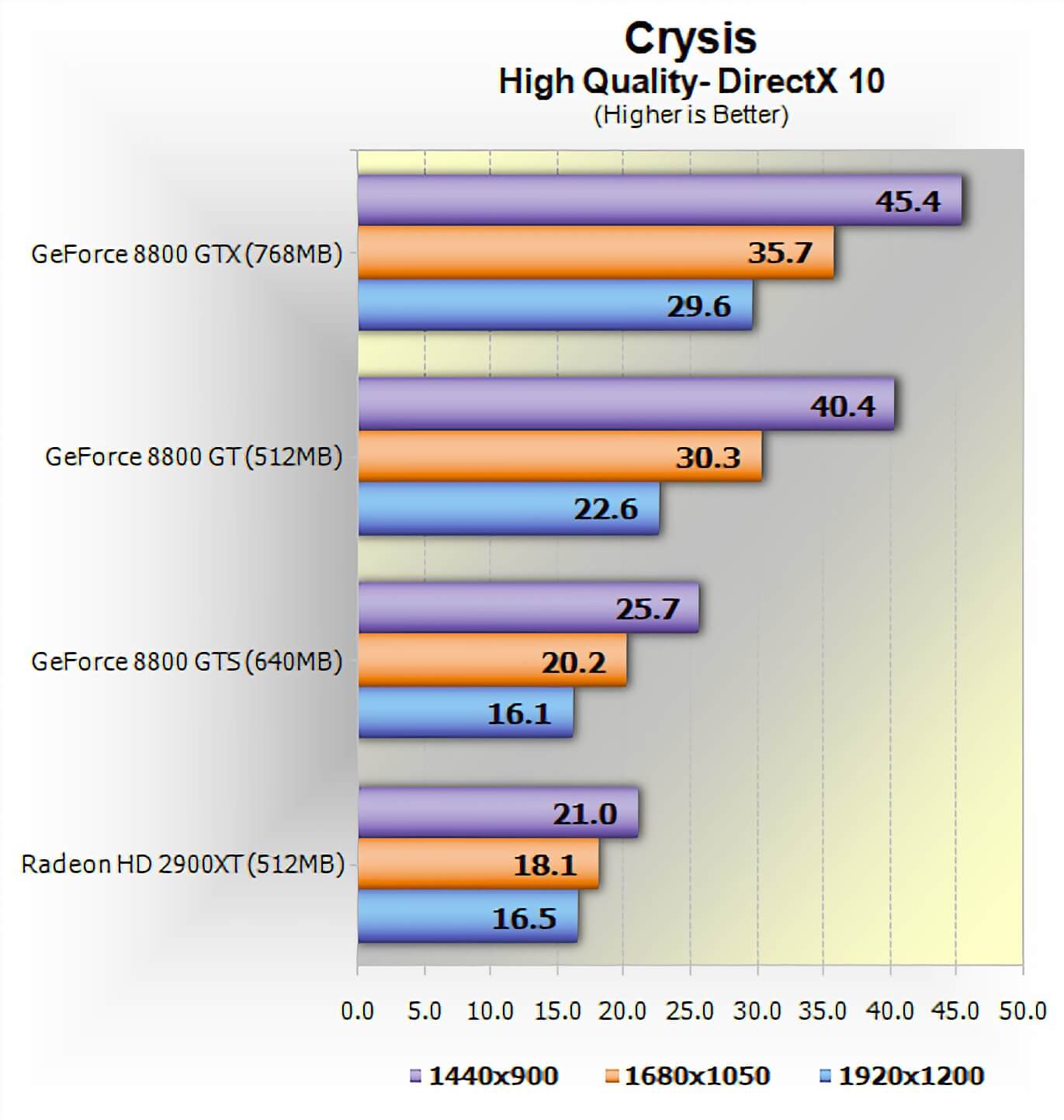

Crysis was released in November 2007, but a one level demo and map editor were made available a month earlier. We were already in the business of benchmarking hardware and PC games, and we tested the performance of that demo (and the 1.1 patch with SLI/Crossfire later on). We found it to be pretty good -- well, only when using the very best graphics cards at the time and with medium quality settings. Switching things to high settings completely annihilated mid-range GPUs.

Worse still, the much touted DirectX 10 mode and anti-aliasing did little to inspire confidence in the game's potential. During earlier previews to the press, the less-than-stellar frame rates were already being identified as a potential issue, but Crytek had promised that further refinement and updates would be forthcoming.

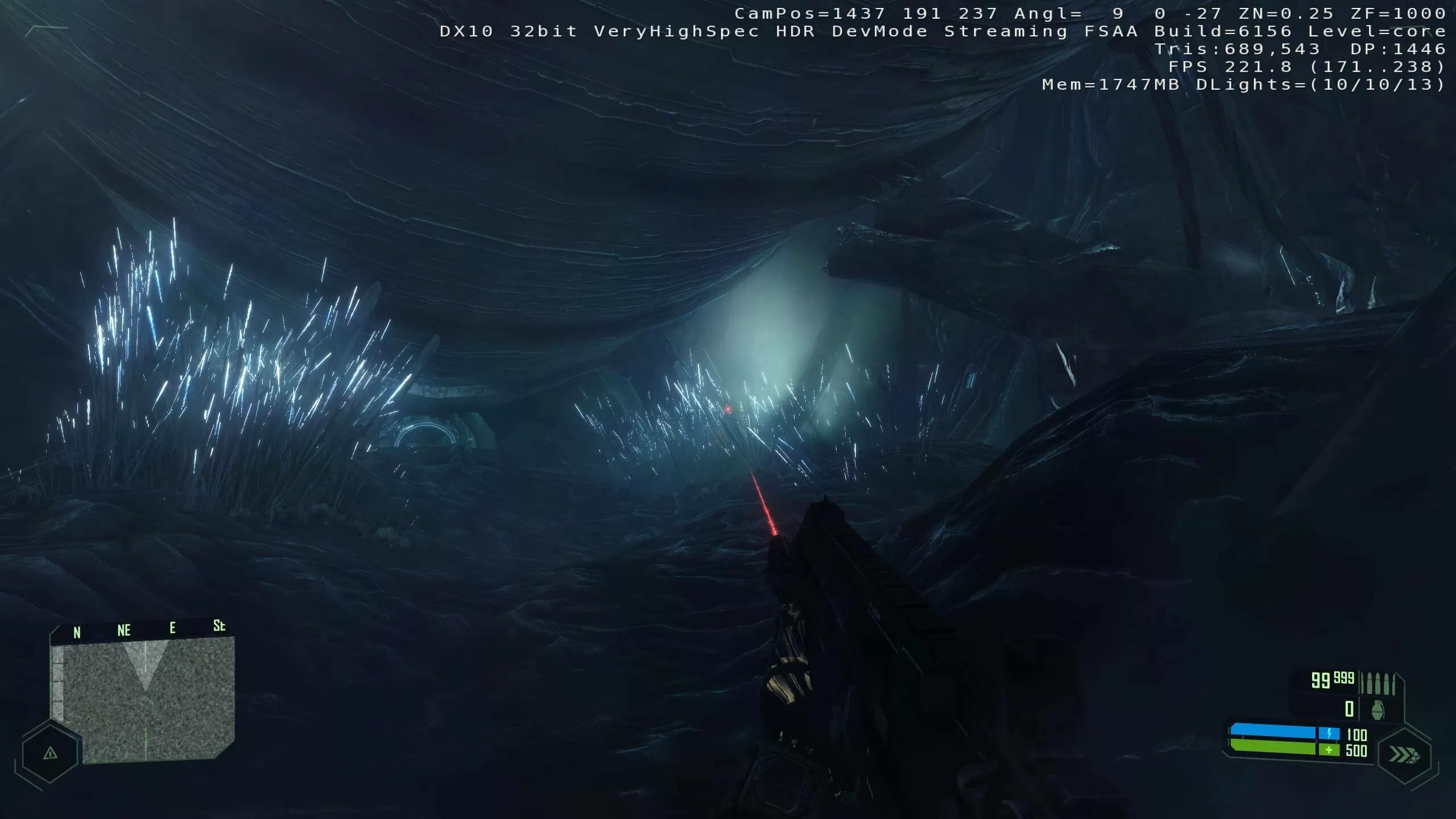

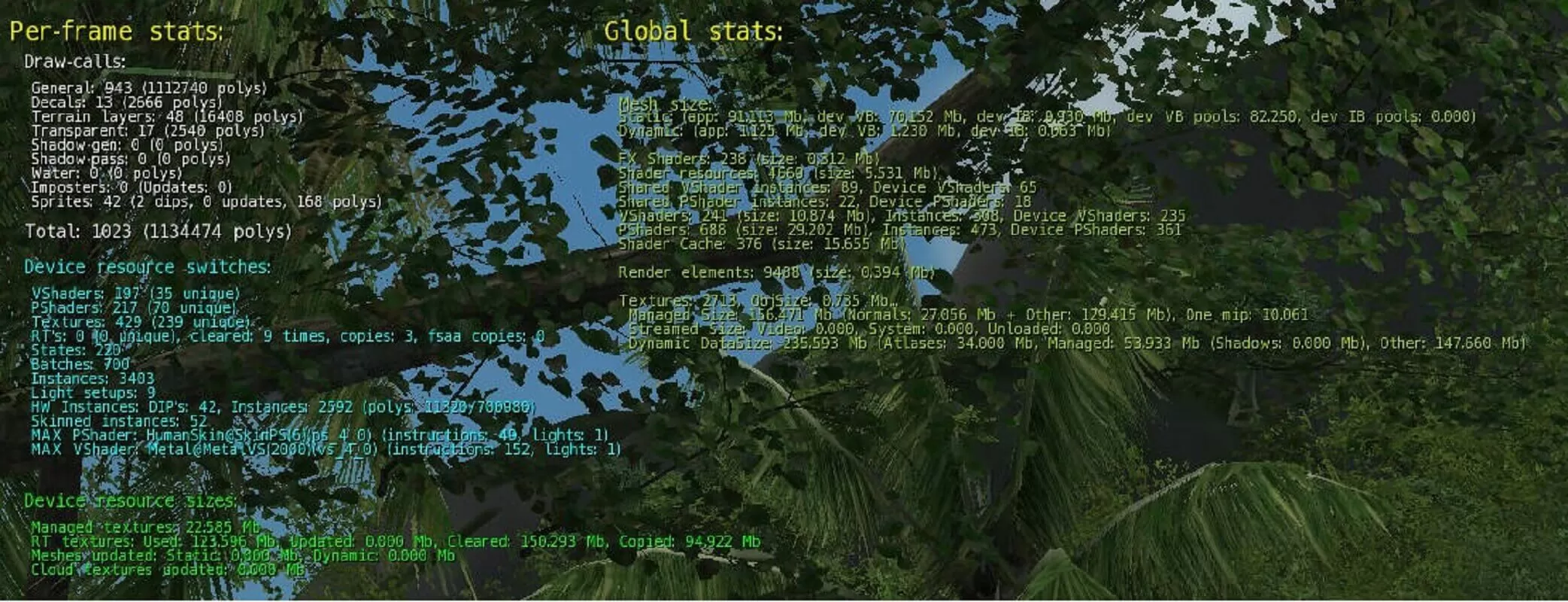

So what exactly was going on to make the game so demanding on hardware? We can get a sense of what's happening behind the scenes by running the title with 'devmode' enabled. This option opens up the console to allow all kinds of tweaks and cheats to be enabled, and it provides an in-game overlay, replete with rendering information.

In the above image, taken relatively early on in the game, you can see the average frame rate and the amount of system memory being used. The values labelled Tris, DP, and DLights display the number of triangles per frame, the number of draw calls per frame, and how many directional (and dynamic) lights are being used.

First, let's talk about polygons: more specifically, 2.2 million of them. That's how many were used to create the scene, although not all of them will actually be displayed (non-visible ones are culled early in the rendering process). Most of that count is for the vegetation, because Crytek chose to draw out the individual leaves on the trees, rather than use simple transparent textures.

Even fairly simple environments packed a huge number of polygons (compared to other titles of that time). This image from later in the game doesn't show much, as it's dark and underground, but there are still nearly 700,000 polygons in use here.

Also compare the difference in the number of directional lights: in the outdoor jungle scene, there's just the one (i.e. the Sun) but in this area, there are 10. That might not sound like much, but every dynamic light casts active shadows. Crytek used a mixture of technologies for these, and while all of them were cutting edge (cascaded and variance shadow maps, with randomly jittered sampling, to smooth out the edges), they added a hefty amount to the vertex processing load.
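As an aside on how variance shadow maps smooth out shadow edges, the following Python sketch shows the general published technique, not code from CryEngine 2. The function names and sample depths are hypothetical. Instead of storing a single depth per shadow map texel, the map stores the depth and the depth squared; after filtering, those two averages give a mean and variance, and Chebyshev's inequality turns them into an upper bound on how much of the filtered region is lit.

```python
def filtered_moments(depths):
    """Average depth and depth squared over a filter region of the shadow map."""
    n = len(depths)
    return sum(depths) / n, sum(z * z for z in depths) / n

def vsm_visibility(moments, receiver_depth, min_variance=1e-5):
    """Upper bound on the lit fraction at receiver_depth (one-tailed Chebyshev inequality)."""
    mean, mean_sq = moments
    if receiver_depth <= mean:
        return 1.0  # The surface is in front of the average occluder: treat it as fully lit.
    variance = max(mean_sq - mean * mean, min_variance)
    distance = receiver_depth - mean
    return variance / (variance + distance * distance)

# Hypothetical filter region straddling a shadow edge: half near occluder, half far surface.
edge_region = filtered_moments([0.2, 0.2, 0.8, 0.8])
soft_edge = vsm_visibility(edge_region, 0.8)   # partially lit, giving a soft edge
unshadowed = vsm_visibility(edge_region, 0.3)  # in front of the occluders
print(soft_edge, unshadowed)
```

The appeal is that the two stored values can be blurred like any ordinary texture, so soft edges come from cheap filtering rather than many depth comparisons per pixel, although every shadow-casting light still needs its own map rendered from the scene geometry.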

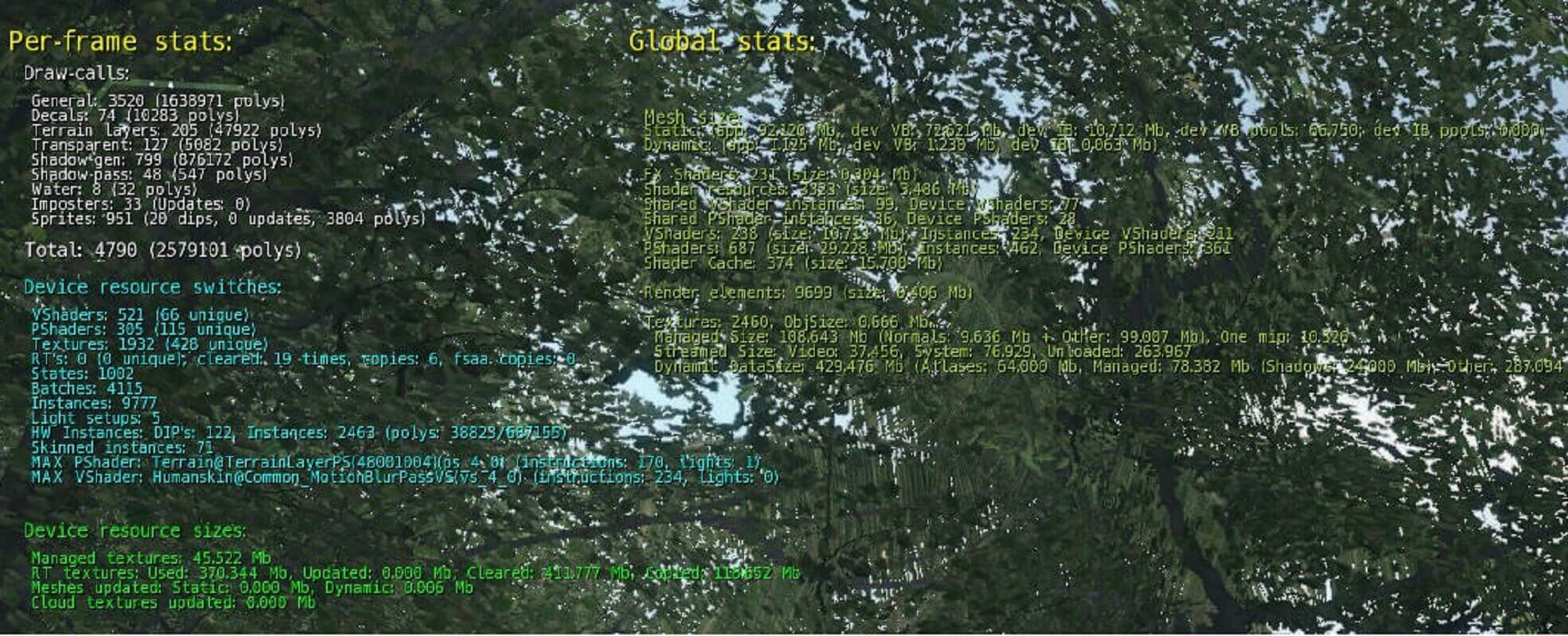

The DP figure tells us how many times a different material or visual effect has been 'called' for the objects in the frame. A useful console command is r_stats -- it provides a breakdown of the draw calls, as well as the shaders being used, details on how the geometry is being handled, and the texture load in the scene.

We'll head back into the jungle again and see what's being used to create this image.

We ran the game in 4K resolution, to get the best possible screenshots, so the stats information isn't super clear. Let's zoom in for a closer inspection.

In this scene, over 4,000 batches of polygons were called in, for 2.5 million triangles worth of environment, to apply a total of 1,932 textures (although the majority of these were repeats, for objects such as the trees and ground). The biggest vertex shader was just for setting up the motion blur effect, comprising 234 instructions in total, and the terrain pixel shader was 170 instructions.

For a 2007 game, this many calls was extremely high, as the CPU overhead in DirectX 9 (and 10, although to a lesser extent) for making each call wasn't trivial. Some AAA titles on consoles would soon be packing this many polygons, but their graphics software was far more streamlined than DirectX.

We can get a sense of this issue by using the Batch Size Test in 3DMark06. This DirectX 9 tool draws a series of moving and color changing rectangles across the screen, using an increasing number of triangles per batch. Tested on a GeForce RTX 2080 Super, the results show a marked difference in performance across the various batch sizes.

We can't easily tell how many triangles are being processed per batch, but if the number indicated covers all of the polygons used, then it's just an average of 600 or so triangles per batch, which is far too small. However, the large number of instances (where an object can be used multiple times, for one call) probably counters this issue.
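The 600-or-so figure comes straight from the stats quoted earlier, and a few lines of Python make the arithmetic explicit. The second half is only a toy model: the per-call CPU cost used here is a hypothetical number chosen for illustration, not something measured in Crysis or DirectX.

```python
# Figures quoted from the r_stats readout of the jungle scene.
triangles_per_frame = 2_500_000
batches_per_frame = 4_000

triangles_per_batch = triangles_per_frame / batches_per_frame
print(f"average triangles per batch: {triangles_per_batch:.0f}")

def cpu_bound_fps(draw_calls, overhead_us_per_call):
    """Frame rate ceiling if the CPU did nothing but submit draw calls on one thread."""
    seconds_per_frame = draw_calls * overhead_us_per_call * 1e-6
    return 1.0 / seconds_per_frame

# HYPOTHETICAL per-call cost, purely to show how quickly call overhead adds up.
ASSUMED_OVERHEAD_US = 10.0
fps_ceiling = cpu_bound_fps(batches_per_frame, ASSUMED_OVERHEAD_US)
print(f"CPU-side ceiling at {ASSUMED_OVERHEAD_US} us per call: {fps_ceiling:.1f} fps")
```

Whatever the real per-call cost was, the relationship is linear: halve the number of calls and the CPU-side ceiling doubles, which is why small batches hurt so much on the APIs of that era.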

Some levels in Crysis used even higher numbers of draw calls; the aircraft carrier battle is a good example. In the image below, we can see that while the triangle count is not as high as the jungle scenes, the sheer number of objects to be moved, textured, and individually lit pushes the bottleneck in the rendering performance onto the CPU and graphics API/driver systems.

A common criticism of Crysis was that it was poorly optimized for multicore CPUs. While most top-end gaming PCs sported dual core processors prior to the game being launched, AMD released their quad core Phenom X4 range at the same time and Intel did have some quad core CPUs in early 2007.

So did Crytek mess this up? We ran the default CPU2 timedemo on a modern 8-core Intel Core i7-9700 CPU, which supports up to 8 threads. At face value, it would seem there is some truth to the accusations, as while the overall performance was not too bad for a 4K resolution test, the frame rate frequently ran below 25 fps and into single figures at times.

However, running the tests once again, but this time limiting the number of available cores in the motherboard BIOS, tells a different side to the story.

There was a clear increase in performance going from 1 to 2 cores, and again from 2 to 4 (albeit a much smaller gain). After that, there was no appreciable difference, but the game certainly made use of the presence of multiple cores. Given that quad core CPUs were going to be the norm for a number of years, Crytek had no real need to try and make use of anything more than that.

In DirectX 9 and 10, the execution of graphics instructions is typically done via one thread (because the performance hit for doing otherwise is enormous). This means that the high number of draw calls seen in many of the scenes results in just 1 or 2 cores being seriously loaded up -- neither version of the graphics API really allows for multiple threads to be used to queue up everything that needs to be displayed.

So it's not really a case that Crysis doesn't properly support multithreading, as it clearly does within the limitations of DirectX; instead, it's the design choices made for the rendering (e.g. accurately modelled trees, dynamic and destructible environments) that are responsible for the performance drops. Remember the image from the game taken in a cave, earlier in this article? Note that the DP figure was under 2,000, and then look at the FPS counter.
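This pattern of diminishing returns is what Amdahl's law predicts when part of each frame has to run on a single thread. The Python sketch below is only an illustration: the parallel fraction is a hypothetical value, not something measured from Crysis, and real scaling also depends on memory, the GPU, and the driver.

```python
def amdahl_speedup(parallel_fraction, cores):
    """Ideal speedup when only parallel_fraction of the work can be spread across cores."""
    serial_fraction = 1.0 - parallel_fraction
    return 1.0 / (serial_fraction + parallel_fraction / cores)

# HYPOTHETICAL split: half of the frame time is stuck on one thread (e.g. draw call submission).
PARALLEL_FRACTION = 0.5

speedups = {cores: amdahl_speedup(PARALLEL_FRACTION, cores) for cores in (1, 2, 4, 8)}
for cores, speedup in speedups.items():
    print(f"{cores} core(s): {speedup:.2f}x")
```

With these assumed numbers, each doubling of cores buys less than the one before, and no number of cores can ever take the speedup past 2x, because the single-threaded half of the frame never gets any shorter.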

Beyond the draw call and API issue, what else is going on that's so demanding? Well, everything really. Let's go back to the scene where we first examined the draw calls, but this time with all of the graphics options switched to the lowest settings.

We can see some obvious things from the devmode data, such as the polygon count halving and draw calls cut to about 25% of the amount when using the very high settings. But notice how there are no shadows at all, nor any ambient occlusion or fancy lighting.

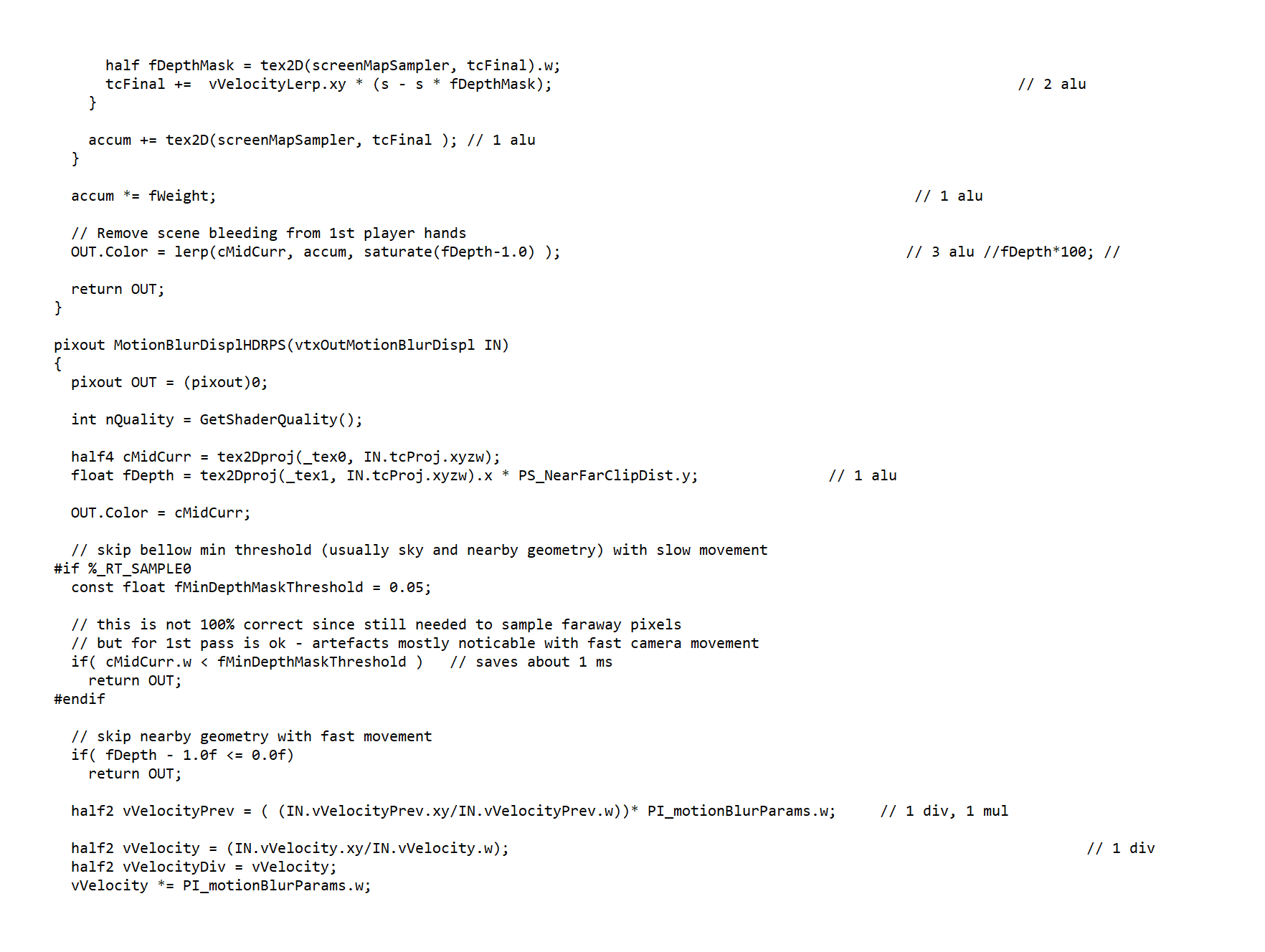

The oddest change, though, is that the field of view has been reduced -- this simple trick reduces the amount of geometry and pixels that have to be rendered in the frame, because any that fall out of the view get culled from the process early on. Digging into the stats again shines even more light on the situation.
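The effect of a narrower field of view on culling can be shown with a simplified, top-down Python sketch. Everything here is an assumption for illustration: the object grid, the two field of view values, and the 2D angle test stand in for the full 3D view frustum test that a real engine performs.

```python
import math

def visible_objects(objects, fov_degrees):
    """Keep objects whose angle from the camera's forward (+z) axis is within half the field of view."""
    half_angle = math.radians(fov_degrees) / 2.0
    kept = []
    for x, z in objects:
        if z <= 0:
            continue  # Behind the camera: always culled.
        if abs(math.atan2(x, z)) <= half_angle:
            kept.append((x, z))
    return kept

# Hypothetical scene: a grid of objects in front of a camera sitting at the origin.
scene = [(x, z) for x in range(-10, 11) for z in range(1, 11)]

wide = visible_objects(scene, 100)   # made-up 'wide' setting
narrow = visible_objects(scene, 60)  # made-up 'narrow' setting
print(f"{len(scene)} objects in the scene, {len(wide)} kept at 100 degrees, {len(narrow)} kept at 60 degrees")
```

Everything rejected by this test never reaches the vertex or pixel shaders, so narrowing the view is a cheap way to shrink the workload without touching the assets themselves.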

We can see just how much simpler the shader load is, with the largest pixel shader only having 40 instructions (where previously it was 170). The biggest vertex shader is still quite compact at 152 instructions, but it's only for the metal surfaces.

As you increase the graphics settings, the application of shadows hugely increases the total polygon and draw call count, and once into the high and very high quality levels, the overall lighting model switches to HDR (high dynamic range) and fully volumetric; motion blur and depth of field are also heavily used (the former is exceedingly complex, involving multiple passes, large shaders, and lots of sampling).

So does this mean Crytek just slapped in every possible rendering technique they could, without any concern for performance? Is this why Crysis deserves all the jokes? While the developers were targeting the very best CPUs and graphics cards of 2007 as their preferred platform, it would be unfair to claim that the team didn't care how well it ran.

Unpacking the shaders from the game's files reveals a wealth of comments within the code, with many of them indicating hardware costs, in terms of instruction and ALU cycle counts. Other comments refer to how a particular shader is used to improve performance or when to use it in preference to another to gain speed.

Developers don't usually make games in such a fashion that they're fully supported by all changes in technology over time. As soon as one project has finished, they're on to the next one and it's in future titles that lessons learnt in the past can be applied. For example, the original Mass Effect was quite demanding on hardware, and still runs quite poorly when set to 4K today, but the sequels run fine.

Crysis was made to be the best looking game that could possibly be achieved and still remain playable, bearing in mind that games on consoles routinely ran at 30 fps or less. When it came time to move onto the obligatory successors, Crytek clearly chose to scale things back down and the likes of Crysis 3 run notably better than the first one does -- it's another fine looking game, but it doesn't quite have the same wow factor that its forefather did.

Once More Unto the Breach

In April 2020, Crytek announced that they would be remastering Crysis for the PC, Xbox One, PS4, and Switch, targeting a July release date -- they missed a trick here, as the original game actually begins in August 2020!

That was quickly followed by an apology for a launch delay to get the game up to the "standard [we've] come to expect from Crysis games."

It has to be said that the reaction to the trailer and leaked footage was rather mixed, with folks criticizing the lack of visual differences to the original (and in some areas, it was notably worse). Unsurprisingly, the announcement was followed by a raft of comments, echoing the earlier memes -- even Nvidia jumped onto the bandwagon with a tweet that just said "turns on supercomputer."

When the first title made its way onto the Xbox 360 and PS3, there were some improvements, mostly concerning the lighting model, but the texture resolution and object complexity were greatly decreased.

With the remaster targeting multiple platforms all together, it will be interesting to see how Crytek embraces the legacy that they created or if they're willing to take the safe road to keep everyone happy. For now, the Crysis from 2007 still lives up to its reputation, and deservedly so. The bar it set for what could be achieved in PC gaming is still aspired to today.

Source: https://www.techspot.com/article/2053-can-it-run-crysis-history/

Posted by: stilesfamere57.blogspot.com
